[Updated 26Mar2019. See the update below.]

Note: This process still works with XenServer 8.2.

While creating Virtual Machines (VMs) for a customer’s labs, I needed 10 VMs running at the same time. My XenServer host uses a 1.5TB local SATA drive for VM storage and an iSCSI storage server. I can’t use the storage server for these VMs because I will be taking my lab XenServer to the customer site, and I don’t want to haul two very heavy full-tower servers. After I got the eighth VM running, my XenServer host was begging for mercy: disk traffic was saturating the local SATA bus. Since I needed all 10 VMs running, I needed a solution fast, so I ordered a Solid State Drive (SSD) to put in the XenServer host. Because I am not a Linux geek, I decided to document what I had to do to make the SSD available for exclusive use by XenServer 5.6 SP2.

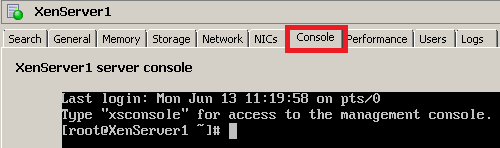

In XenCenter, select the XenServer host, click the Console tab, and press Enter (Figure 1).

Note: Thanks to Denis Gundarev for his help with the following Linux commands.

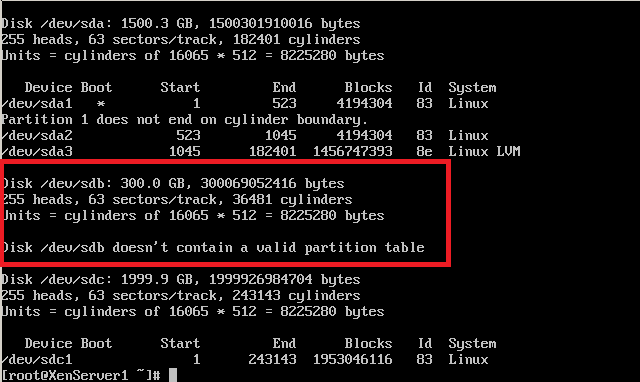

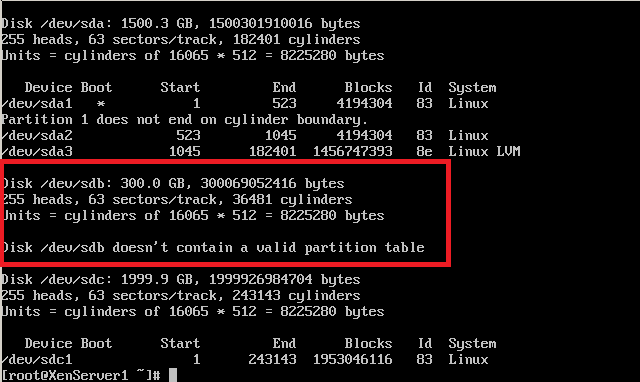

From the console prompt, type fdisk -l (that is a lowercase letter L). This lists all the drives and partitions that XenServer sees (Figure 2).

Figure 2

My new SSD is shown as Disk /dev/sdb. The original drive where XenServer is installed shows as Disk /dev/sda, and the 2TB external USB drive shows as Disk /dev/sdc. The following commands use my drive’s /dev/sdb designation; substitute the device name your system shows for the new drive. In the session below, the commands to type follow the prompts (for example, after [root@XenServer1 ~]# or Command (m for help):), and my comments about those commands are in square brackets [] after the commands. Do not type the comments.
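Because fdisk and mkfs will wipe whatever device you point them at, it is worth double-checking which disk is the new, empty one before going further. Here is a minimal sketch, not part of my original procedure, that reads /proc/partitions and prints each whole disk that has no partitions yet, along with its size in 1 KiB blocks. The function name list_unpartitioned_disks is hypothetical, and it assumes SCSI-style sd* device names like the ones on my host; other naming schemes (NVMe, for example) are ignored.

```shell
#!/bin/sh
# Hypothetical helper (not a XenServer tool): list whole sd* disks that have
# no partitions yet, using the kernel's partition summary in /proc/partitions.
# The optional argument lets you point it at a saved copy of that file.
list_unpartitioned_disks() {
    local parts_file="${1:-/proc/partitions}"
    awk '
        # Data rows start with a numeric major number; this skips the header.
        $1 ~ /^[0-9]+$/ && NF == 4 { size[$4] = $3 }
        END {
            for (disk in size) {
                if (disk !~ /^sd[a-z]+$/) continue   # only whole sd* disks
                partitioned = 0
                for (name in size)
                    if (name ~ ("^" disk "[0-9]+$")) partitioned = 1
                if (!partitioned) print disk, size[disk]   # size in 1 KiB blocks
            }
        }' "$parts_file" | sort
}

# Example: list_unpartitioned_disks
```

If more than one disk shows up, compare the sizes against the fdisk -l output before picking one.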

[root@XenServer1 ~]# fdisk /dev/sdb

Device contains neither a valid DOS partition table nor Sun, SGI, or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only until you decide to write them. After that, of course, the previous content won’t be recoverable.

The number of cylinders for this disk is set to 36481. There is nothing wrong with that, but this is larger than 1024, and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs (e.g., DOS FDISK, OS/2 FDISK)

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n [new partition]

Command action

e extended

p primary partition (1-4)

p [make the partition a primary partition]

Partition number (1-4): 1 [partition number 1]

First cylinder (1-36481, default 1): [press Enter to accept the default]

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-36481, default 36481): [press Enter to accept the default]

Using default value 36481

Command (m for help): t [change the partition type]

Selected partition 1

Hex code (type L to list codes): 83 [83 is the Linux partition type]

Command (m for help): w [write partition table]

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.
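If you need to repeat this on another host, the same answers can be piped into fdisk instead of typed. This is a minimal sketch, assuming the disk is blank and that your fdisk asks the same questions in the same order as the session above (newer fdisk versions ask for sectors instead of cylinders, and the two empty answers still accept the defaults). The function names fdisk_answers and partition_whole_disk are hypothetical, and the only safety check is that the argument is a block device, so be sure of the device name first.

```shell
#!/bin/sh
# Hypothetical helpers: replay the interactive fdisk answers shown above.
fdisk_answers() {
    # n = new partition, p = primary, 1 = partition number,
    # two empty lines = accept default first and last cylinder/sector,
    # t = change partition type, 83 = Linux, w = write the table and exit
    printf 'n\np\n1\n\n\nt\n83\nw\n'
}

partition_whole_disk() {
    local disk="$1"
    # Refuse anything that is not a block device (typos, missing disks)
    if [ ! -b "$disk" ]; then
        echo "$disk is not a block device; refusing to continue" >&2
        return 1
    fi
    fdisk_answers | fdisk "$disk"
}

# Example (DESTROYS everything on the disk): partition_whole_disk /dev/sdb
```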

[root@XenServer1 ~]# mkfs.ext3 /dev/sdb1 [format partition]

mke2fs 1.39 (29-May-2006)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

36634624 inodes, 73258400 blocks

3662920 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=0

2236 block groups

32768 blocks per group, 32768 fragments per group

16384 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208, 4096000, 7962624, 11239424, 20480000, 23887872, 71663616

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 25 mounts or 180 days, whichever comes first. Use tune2fs -c or -i to override.
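Before creating the SR, a quick check that the partition now carries an ext3 file system confirms the mkfs step worked. This is a minimal sketch, assuming blkid is available in the XenServer control domain (treat that as an assumption for your version); check_fs_type is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: confirm a partition has the expected file system type.
check_fs_type() {
    local dev="$1" expected="$2" actual
    # blkid prints just the TYPE value (for example "ext3") with these options
    actual=$(blkid -s TYPE -o value "$dev") || {
        echo "blkid found no file system on $dev" >&2
        return 1
    }
    if [ "$actual" = "$expected" ]; then
        echo "$dev: $actual (ok)"
    else
        echo "$dev: found '$actual', expected '$expected'" >&2
        return 1
    fi
}

# Example: check_fs_type /dev/sdb1 ext3
```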

[root@XenServer1 ~]# xe sr-create type=ext shared=false device-config:device=/dev/sdb1 name-label=SSD [create Storage Repository]

[Update 26Mar2019: In later versions of XenServer (I am using 7.6), xe sr-create requires an additional parameter, host-uuid.

xe sr-create type=ext shared=false device-config:device=/dev/sdb1 name-label=SSD host-uuid=f87f5b5f-a079-444f-849a-1de9513a60e9

The host’s UUID is shown on the host’s General tab in XenCenter. You can right-click the UUID value and copy it to the clipboard. Use your own host’s UUID, not the one shown above.]
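On versions that need host-uuid, the UUID can also be looked up from the console instead of copied from XenCenter. This is a minimal sketch, assuming a standalone host (so xe host-list returns exactly one UUID); create_local_ext_sr is a hypothetical helper name, and the sr-create parameters are the ones from the update above.

```shell
#!/bin/sh
# Hypothetical helper: create a local ext SR without copying the UUID by hand.
create_local_ext_sr() {
    local device="$1" label="$2"
    local host_uuid
    # --minimal prints bare values; several hosts come back comma-separated
    host_uuid=$(xe host-list params=uuid --minimal)
    case "$host_uuid" in
        "" | *,*)
            echo "expected exactly one host UUID, got '$host_uuid'" >&2
            return 1 ;;
    esac
    xe sr-create type=ext shared=false \
        device-config:device="$device" \
        name-label="$label" \
        host-uuid="$host_uuid"
}

# Example: create_local_ext_sr /dev/sdb1 SSD
```

In a pool with several hosts, pick the intended host’s UUID explicitly instead; the helper refuses to guess when it sees more than one.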

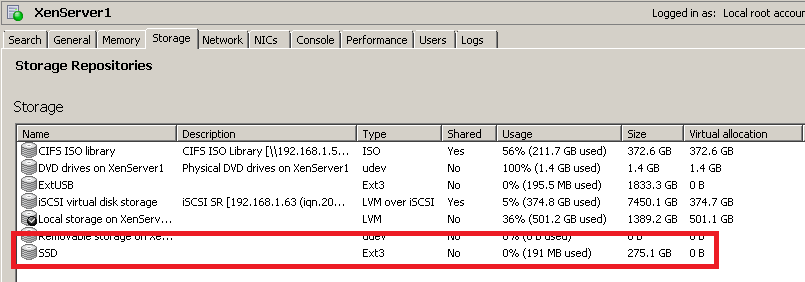

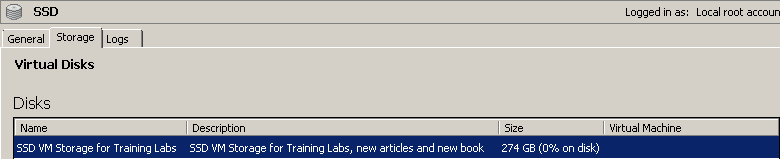

Once the Storage Repository (SR) is created, it is available in XenCenter in the Storage tab (Figure 3). Creating the new SR on the SSD drive took about 30 seconds.

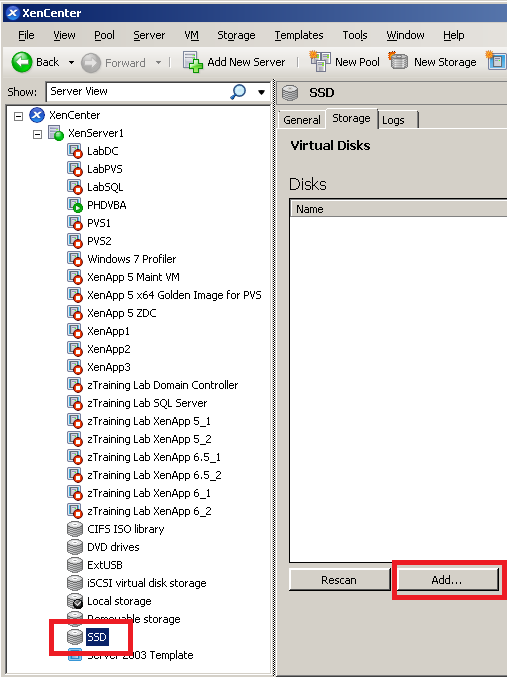

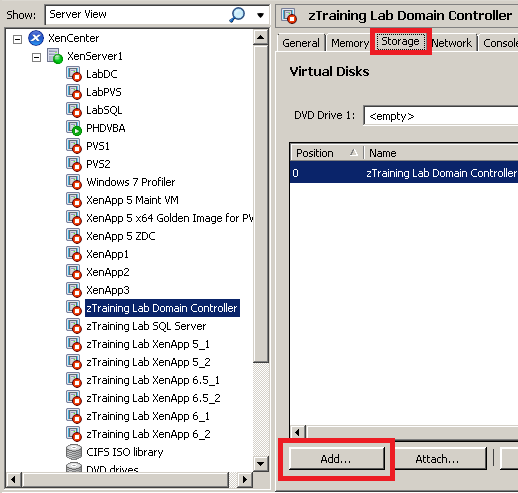

Select the SSD SR in the Server View and click Add… (Figure 4).

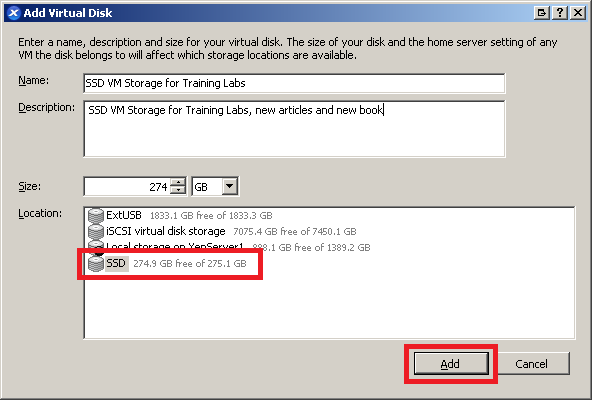

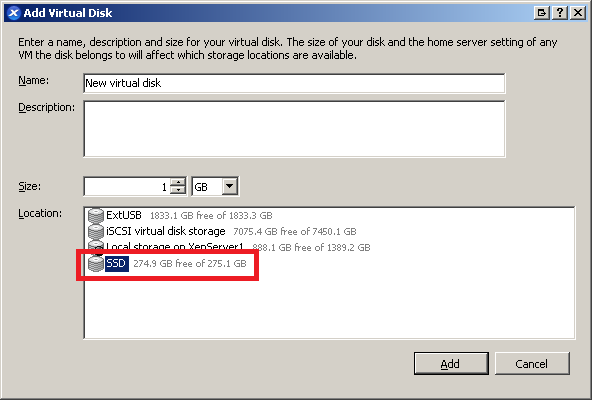

Enter a Name, Description, and Size, select the SSD SR, and click Add (Figure 5).

The new Virtual Disk appears in XenCenter with no VM assigned (Figure 6).
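The same kind of virtual disk can also be created from the console. This sketch looks up the SR by its name label and calls xe vdi-create; create_vdi_on_sr is a hypothetical helper name, the size is given in bytes, and it assumes exactly one SR is named SSD. Like the disk created in XenCenter, the new virtual disk is not attached to any VM.

```shell
#!/bin/sh
# Hypothetical helper: create an unattached virtual disk on a named SR.
create_vdi_on_sr() {
    local sr_label="$1" vdi_label="$2" size_bytes="$3"
    local sr_uuid
    sr_uuid=$(xe sr-list name-label="$sr_label" params=uuid --minimal)
    case "$sr_uuid" in
        "" | *,*)
            echo "expected exactly one SR named '$sr_label', got '$sr_uuid'" >&2
            return 1 ;;
    esac
    # xe vdi-create prints the UUID of the new, unattached virtual disk
    xe vdi-create sr-uuid="$sr_uuid" name-label="$vdi_label" \
        type=user virtual-size="$size_bytes"
}

# Example: a 20 GiB disk (20 * 1024^3 bytes)
# create_vdi_on_sr SSD "Lab data" $((20 * 1024 * 1024 * 1024))
```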

To verify the new storage repository and its new virtual disk are available to a VM, select any VM, click the Storage tab and click Add… (Figure 7).

The SSD SR shows as available to add a new virtual disk to the selected VM (Figure 8).

Click Cancel. The new SSD-based storage repository and its new virtual disk are ready for use.

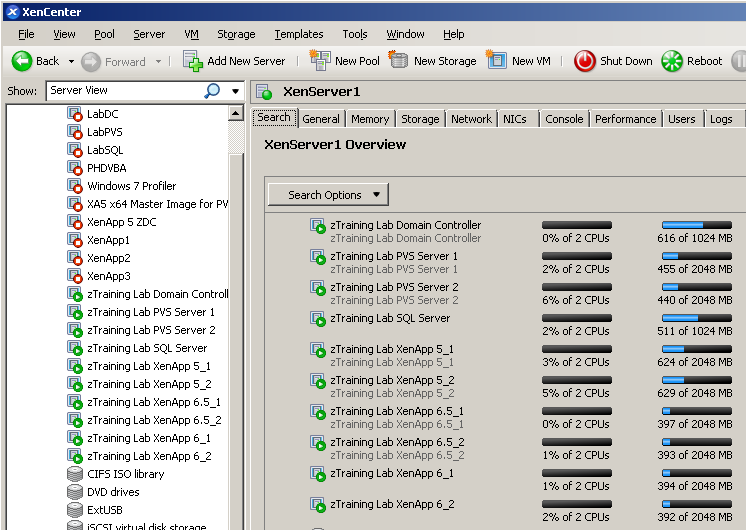

After adding the SSD, I was able to create the other two VMs needed for the labs (Figure 9). Next, I tested how much the SSD improved VM startup time. I started the Domain Controller and waited until the login screen appeared (87 seconds), started the SQL server and waited until its login screen appeared (37 seconds), then selected all eight remaining VMs and clicked Start on the menu bar. 53 seconds later, the last VM was at the login screen.
